The Terminal Telephone Game: How AI is Distorting Our Reality

So, I’m running into an increasingly concerning research problem.

Last week, I wrote a piece that took considerably more research than usual, because it’s a topic I’m not great at: hard science. It’s about why human beings are never going to colonize other planets. I understood the topic I was writing about, but I really wanted to make sure I got the numbers and the science right, lest I look a fool and have to make a bunch of embarrassing corrections when people smarter than me descend on the comments.

Searching through YouTube, I stumbled on this video by the renowned physicist Leonard Susskind, which seemed to be a slam dunk for what I was looking for. After watching for a couple of minutes, I began to realize something was off about it.

If you’re trying to pay attention to the lecture, the oddities aren’t immediately obvious, but once you notice them there’s no going back: Susskind’s weird, jerky head movements, like he has some sort of tic. The strange hand gesture he keeps making over and over again. This is an AI deepfake.

This took me down a rabbit hole. At first I figured it was no big deal that someone had taken one of Susskind’s lectures and animated him over the top of it, but then I began to notice a strange evenness in his voice, which made me suspect that the audio is also artificially generated. There is no information in the description about where it was sourced from. As an imitation, it’s an amazing rendering of his voice. Besides the uncanny-valley evenness of it, it sounds pretty much exactly like him. Compare it with a definitely real lecture recording from Stanford:

The inevitable conclusion is that the script itself may well have been written by AI too, and that Susskind has absolutely nothing to do with any of this. For one thing, there’s the uncharacteristically YouTube-influencery “smash that like button” thing that he does five minutes into the video.

Then there’s the fact that the channel is crowded with Susskind videos, many of which cover the same information reworded. Here’s another video from the same channel that pretty much re-explains the topic of the video I came across. In this one, both the visuals and the audio are much more obviously AI-generated.

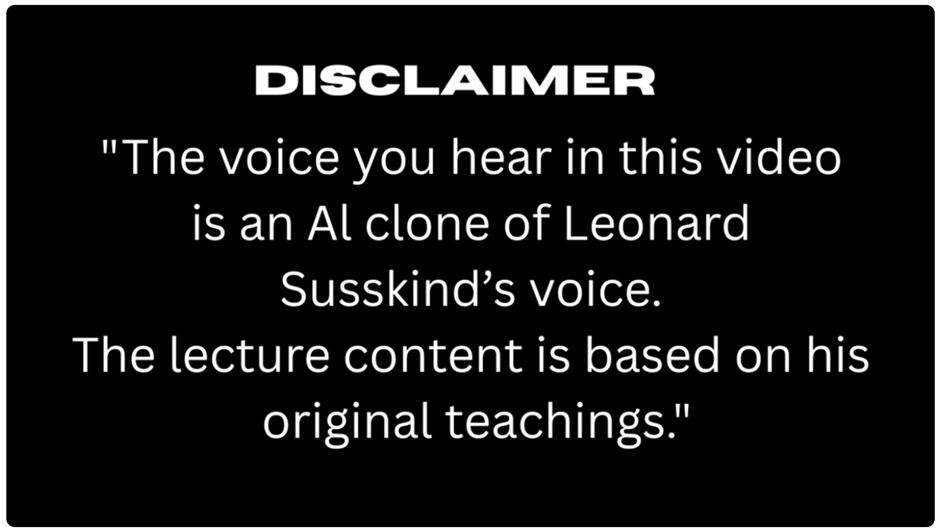

Finally, I got to the bottom of it: some of these videos carry a disclaimer in a single frame, for a fraction of a second. It is impossible to read unless you pause and navigate to that specific frame. It says the content is “based on” his teachings.

If I had just listened to this instead of watching it and seeing Pseudo-Susskind’s robotic gestures, none of this might have occurred to me, and I might have taken it as authoritative.

I wound up finding enough human sources to back up what I wanted to write about, and the content of the video turns out to be, at least as far as I can tell, pretty much correct. But even if it’s right, I can’t use this as a source, any more than I could use Wikipedia as a source. (Please don’t interpret that as criticism of Wikipedia, which I believe is the most important website on the internet and a phenomenal research tool. It’s just not a primary source.)

What I do worry about is the number of people out there, churning out articles, who might be less obsessed with their information hygiene. Or, that AI will soon get so good at human mimicry that information hygiene will become insurmountably difficult.

In AI parlance, there’s a concept termed “recursive degradation,” often called “model collapse” in the research literature, that refers to what happens when you train AI models on AI-generated content. What you get, essentially, is a photocopy of a photocopy. The result isn’t a refinement like you get from recursive feedback in other areas of computer science. Recursion in AI models leads to a situation that one academic has cleverly termed “Habsburg AI.” If you don’t get the reference, the Habsburgs were a dynasty of European aristocrats and monarchs who intermarried with their close relatives so often that they all started looking like this:

Large language models can’t do original research. Despite the laughable claims of Elon Musk that Grok is going to be able to produce “original physics discoveries” if he only launches enough data centers into space, Grok or Claude or ChatGPT can never be Leonard Susskind, because all they can do is harvest words from the internet and say them back to you in a somewhat different order.

Errors happen. Errors compound like bad genes. In the AI world, errors are called “hallucinations.” In our world, rapidly compounding hallucinations are called “psychosis.”
