The Terminal Telephone Game: How AI is Distorting Our Reality

So, I’m running into an increasingly concerning research problem.

Last week, I wrote a piece that took considerably more research than usual, because it’s a topic I’m not great at: Hard science. It’s about why human beings are never going to colonize other planets. I understood the topic that I was writing about, but I really wanted to make sure I got the numbers and the science right, lest I look a fool and have to make a bunch of embarrassing corrections when people smarter than myself descend on the comments.

Searching through YouTube, I stumbled on this video by the renowned physicist Leonard Susskind, which seemed to be a slam dunk for what I was looking for. After watching for a couple of minutes, I began to realize something was off about it.

If you’re trying to pay attention to the lecture then the oddities aren’t immediately obvious, but once you notice it then there’s no going back: Susskind’s weird jerky head movements, like he has some sort of tic. The strange hand gesture he keeps making over and over again. This is an AI deepfake.

This took me down a rabbit hole. At first I figured it was no big deal that someone had taken one of Susskind’s lectures and animated him over the top of it, but then I began to notice a strange evenness in his voice which made me suspect that the audio is also artificially generated. There is no information in the description about where this was sourced from. As an imitation, it’s an amazing rendering of his voice. Besides the uncanny valley evenness of it, it sounds pretty much exactly like him. Compare it with a definitely real lecture recording from Stanford:

The inevitable conclusion is that I have no reason to believe the script itself was not also written by AI, and that Susskind has absolutely nothing to do with any of this. For one thing, there’s the uncharacteristically YouTube-influencery “smash that like button” thing that he does five minutes into the video.

Then there’s the fact that the channel is crowded with Susskind videos, many of which cover the same information reworded. Here’s another video from the same channel that pretty much re-explains the topic in the video I came across. In this one, both the visual and audio are much more obviously AI-generated.

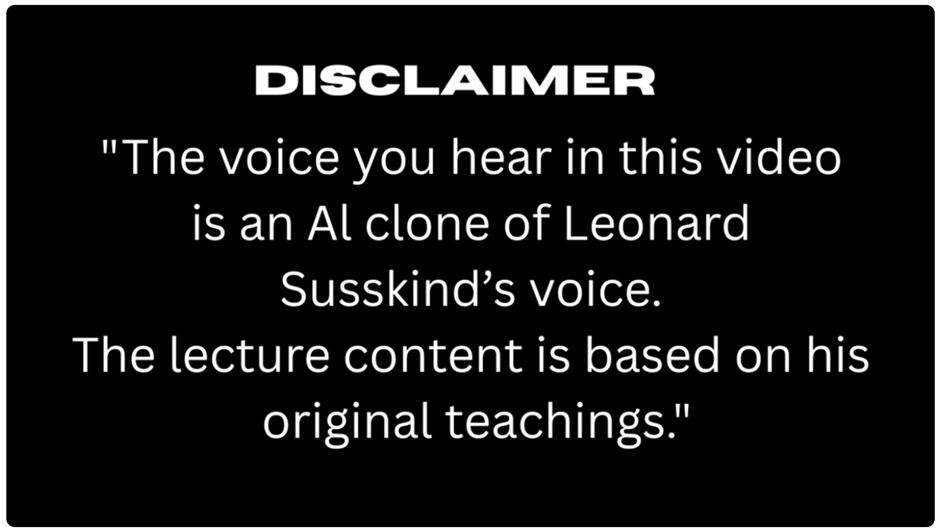

Finally I got to the bottom of it: Some of these videos carry a disclaimer in one single frame, for one fraction of a second. It is impossible to read it unless you pause and navigate to that specific frame: The content is “based on” his teachings.

If I had just listened to this instead of watching it, seeing Pseudo-Susskind’s robotic gestures, then none of this might have occurred to me, and I might have taken it as authoritative.

I wound up finding enough human sources to back up what I wanted to write about, and the content of the video turns out to be, at least as far as I can tell, pretty correct. But, even if it’s right, I can’t use this as a source. Not any more than I could use Wikipedia as a source (please don’t interpret that as criticism of Wikipedia, which I believe is the most important website on the internet and a phenomenal research tool. It’s just not a primary source.)

What I do worry about is the number of people out there, churning out articles, who might be less obsessed with their information hygiene. Or, that AI will soon get so good at human mimicry that information hygiene will become insurmountably difficult.

In AI parlance, there’s a concept termed “recursive degradation” that refers to what happens when you train AI models on AI-generated content. What you get, essentially, is a photocopy of a photocopy. The result isn’t a refinement like you get from recursive feedback in other areas of computer science. Recursion in AI models leads to a situation that one academic has cleverly termed “Habsburg AI.” If you don’t get the reference, the Habsburgs were a dynasty of medieval aristocrats and monarchs who intermarried with their first cousins so often that they all started looking like this:

Large language models can’t do original research. Despite the laughable claims of Elon Musk that Grok is going to be able to produce “original physics discoveries” if he only launches enough data centers into space, Grok or Claude or ChatGPT can never be Leonard Susskind, because all they can do is harvest words from the internet and say them back to you in somewhat of a different order.

Errors happen. Errors compound like bad genes. In the AI world, errors are called “hallucinations.” In our world, rapidly compounding hallucinations are called “psychosis.”

Here's what paid subscribers are reading right now:

AI doesn’t know truth from fiction, fact from falsehood. It doesn’t “know” anything. It has no information hygiene. It can’t weigh source credibility. Anthropic was recently busted for feeding half a million novels into Claude against the wishes of their authors, who obviously don’t want the product of their passion Frankensteined into slop-novels regurgitated onto Amazon for a quick buck.

But if Claude is the ghostwriter of Pseudo-Susskind’s YouTube series, then how do I know how much of its content came from physicists, and how much of it was invented by some young sci-fi author who used invented physics as a plot device? (And who is, in likelihood, making less money from their books than this charlatan is making from a video series that rips off their ideas to repackage them as thoughts of a masquerade of Leonard Susskind.)

The phenomenon of recursive degradation only describes a closed system of AI models themselves: It’s the accumulation of errors that occur when you feed AI-generated content back into AI. But this isn’t actually how AI works in our world, because it ignores our world in the equation.

In reality, what happens is this: Somebody uses AI to generate educational content that contains errors; Somebody like me uses that content as a source to create educational content that unknowingly contains errors; Lots of people believe this erroneous educational content because it’s human-generated and sourced; Many of those people create their own erroneous educational content using me as a credible source; All of this content is scraped and fed back into AI, which can now reaffirm the error with much greater confidence, while introducing more errors.

Humans are the rarely-considered other side of this equation. AI-generated content has become our training data. In short: Is there any reason why recursive degradation can’t affect people?

Forget the fucking boo-hoo concerns of billionaire tech oligarchs worried about the integrity of their plagiarism bots rapidly devolving into incestuous collapse. These people are actively and ferociously engaged in a project to make their illusions literally indistinguishable from reality; to correct the facial tics and linguistic stumbles that gave the game away about Pseudo-Susskind. But they do not seek to correct AI’s propensity to produce errors, because they can’t.

What challenges are we going to face as a society when AI slop becomes so realistic that we cannot determine whether a statement comes from a human expert or from a jumble of words assorted into grammatical accuracy by a digital homunculus wearing an expert’s face?

In 2026, we have just entered the Habsburg zone: As of last year, most of the text generated on this planet is generated by AI. Let that sink in: Less than half of the words written for the past 12 months were written by people. Congratulations, we did it, I guess! Now every new essay you read or listen to that was written from this year onward is a coin toss, if you’re not exactly certain who wrote it.

I started noticing the worrying effect that AI had on research a few weeks ago. The most recent chapter of my book involved writing at length about a topic I knew absolutely nothing about—the evolution of psychotherapy—and I wasn’t quite sure how to come at the research. I started off Googling to find some footing. One of the first things I searched for was (don’t worry, it’s not important that you know what any of this means) whether Neuro-Linguistic Programming was directly inspired by Cognitive Behavioral Therapy.

You’re probably aware that Google, especially if you phrase your search as a question, has an AI thing that pops up and tries to answer your question for you so that you don’t visit any websites or do any real research. In this case, the AI assistant confidently told me, yes! Neuro-Linguistic Programming was directly inspired by Cognitive Behavioral Therapy.

This got me all excited because it would have really strengthened the linearity of the chapter. I wasted a lot of time trying to tease out the details of this before realizing definitively that it was not true. Google’s answer was flat-out wrong.

It says something different now, by the way, which is still inaccurate but not in the same way. It will probably say something different tomorrow. It scrapes its own search results to try to assemble an answer, but it can’t weigh its sources like we can, and even the most amateur of us is capable of deciding, to at least some extent, what is reliable. AI draws information from Quora, from Reddit, from Facebook. The wisdom of the crowd, you may think, but people increasingly post AI generated text on these websites. A photocopy of a photocopy.

How often do people just take an answer that Google AI gave them and store it in their head as a fact? How often do little inaccuracies creep into general knowledge and stick there like a flipped bit? How gradually is the AI-saturated internet turning our knowledge of reality into a telephone game?

I have tentatively used ChatGPT for research before but it is of limited use to me. I will ask it questions and it will tell me answers; I will ask it for sources and it will tell me, oh, nothing in particular but it’s generally accepted. Or, I will ask it to fact-check a dubious historical claim I read in a book, and it will give me a bunch of other “independent sources” that also cite that book as their source. It won’t tell you that because it’s not really capable of checking. Sometimes it has given me useful information and other times what it gives me feels like the runaround.

I spent nearly ten years researching things for my job, but most people don’t have that kind of experience and don’t necessarily know how to deeply interrogate the sources of their information. And you know, I’m not trying to puff myself up, here, I’m not expecting people to be master researchers. I’m not a master researcher, myself, I just know how to do it. People love AI because it makes information accessible by making complex topics easier to understand, and I don’t dispute that it does this. It gives you a lot of facts and every so often one of them is wrong. It gives you a bag of M&Ms and one of them is poisoned.

But even if you are a great researcher, you’re not going to question Leonard Susskind on matters pertaining to physics. I think that’s what bothers me the most. AI companies are actively trying to accelerate our knowledge collapse by making its poisoned information come directly from the mouths of human experts.

Why would they do that? I don’t think it’s for any truly malicious reason, I think it’s the same reason they ran the calculus and decided it was financially worthwhile to pirate half a million novels and settle with the authors out of court. It’s just because the money of a con artist is as real as anyone else’s. Whoever runs these channels probably wouldn’t be making any money running AI-generated text through an AI-generated voice simulator unless they could give the impression that it was written and spoken by Leonard Susskind. So they pay an AI company for the software to make that happen. You might scoff at how many people truly believe this is Leonard Susskind, but I think you would be surprised.

What’s important to understand is that even AI doesn’t know that this isn’t Susskind. Remember, it doesn’t know fact from fiction or truth from lies, even the lies that it, itself, generated, because it has no memories. If the person running this channel says this is Leonard Susskind, then by golly, Susskind it is. The AI will reabsorb these videos into its training data, and at some point will use its content to generate an answer to somebody’s question, and if they want to know its source, it will tell them: Leonard Susskind.

A photocopy of a photocopy.

People love adding this slop to the world. Claude’s stolen novels are used to crank out AI-generated bastardizations for a quick buck, which then flood libraries and booksellers and ultimately get fed back into AI. The technology removes the incentive or necessity to research or write. You churn something out with all of its potential errors, it makes you a few bucks, the AI company gets a cut, then it all gets fed back in.

As most of the content being added to the internet today is AI generated, we have entered the second round of the telephone game. The next phase of AI models will be trained mainly on AI-generated content. It will absorb errors, it will absorb misattributions, and it will circulate back into humanity. Then we will absorb these errors and misattributions, and we will put works out into the world, both human-written and AI-generated and a mix of the two, which contain these errors and misattributions. Then it will repeat. How long before the corpus of human knowledge is just, itself, slop?

Sounds like a question that Leonard Susskind could answer, if anyone can locate the real man to ask him.

If I can manage to accomplish it before research becomes truly impossible, I'm writing a whole book about how the internet corroded our liberal culture into a reactionary populist nightmare within a single generation. The working title is How Geeks Ate the World and if you like this newsletter then you'll probably like my book. If you're unsure, the good news is I’m going to be dropping parts of the draft into this very newsletter as the project comes along—but only for paid subscribers. So if you want to read along in real time, please consider subscribing. Otherwise I’ll be keeping you in the loop. Check it out here.

Here's what paid subscribers are reading right now: